
Enterprise AI deployment faces a fundamental tension: organisations need sophisticated language models but baulk at the infrastructure costs and energy consumption of frontier systems.

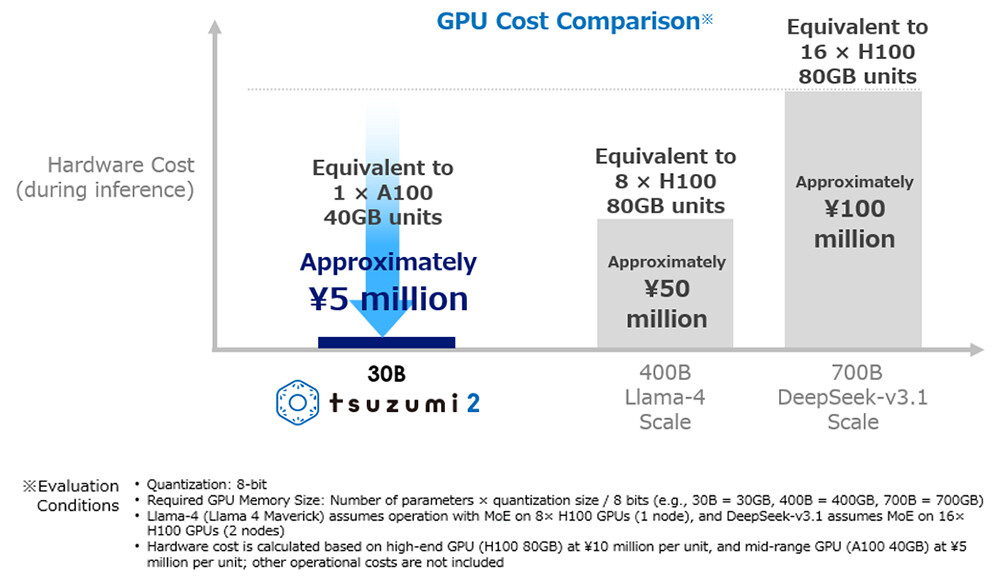

NTT’s recent launch of tsuzumi 2, a lightweight large language model (LLM) running on a single GPU, demonstrates how businesses are resolving this constraint – with early deployments showing performance matching larger models and running at a fraction of the operational cost.

The business case is straightforward. Traditional large language models require dozens or hundreds of GPUs, creating electricity consumption and operational cost barriers that make AI deployment impractical for many organisations.

For enterprises operating in markets with constrained power infrastructure or tight operational budgets, these requirements eliminate AI as a viable option. NTT’s press release illustrates the practical considerations driving lightweight LLM adoption with Tokyo Online University’s deployment.

The university operates an on-premise platform keeping student and staff data in its campus network – a data sovereignty requirement common in educational institutions and regulated industries.

After validating that tsuzumi 2 handles complex context understanding and long-document processing at production-ready levels, the university deployed it for course Q&A enhancement, teaching material creation support, and personalised student guidance.

The single-GPU operation means the university avoids both capital expenditure for GPU clusters and ongoing electricity costs. More significantly, on-premise deployment addresses data privacy concerns that prevent many educational institutions from using cloud-based AI services that process sensitive student information.

NTT’s internal evaluation for financial-system inquiry handling showed tsuzumi 2 matching or exceeding leading external models despite dramatically smaller infrastructure requirements. The performance-to-resource ratio determines AI adoption feasibility for enterprises where the total cost of ownership drives decisions.

The model delivers what NTT characterises as “world-top results among models of comparable size” in Japanese language performance, with particular strength in business domains prioritising knowledge, analysis, instruction-following, and safety.

For enterprises operating primarily in Japanese markets, this language optimisation reduces the need to deploy larger multilingual models requiring significantly more computational resources.

Reinforced knowledge in financial, medical, and public sectors – developed based on customer demand – enables domain-specific deployments without extensive fine-tuning.

The model’s RAG (Retrieval-Augmented Generation) and fine-tuning capabilities allow efficient development of specialised applications for enterprises with proprietary knowledge bases or industry-specific terminology where generic models underperform.
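To make the RAG idea concrete, the following is a minimal sketch of the retrieval step, not NTT's implementation or any tsuzumi 2 API. It assumes a hypothetical in-memory knowledge base (`KNOWLEDGE_BASE`) and uses simple word-overlap cosine similarity in place of the embedding search a production system would typically use. The resulting prompt is what would be passed to whichever locally hosted model the organisation runs.

```python
import math
import re
from collections import Counter


def tokenize(text):
    """Lowercase the text and split it into alphanumeric word tokens."""
    return re.findall(r"[a-z0-9]+", text.lower())


def cosine(a, b):
    """Cosine similarity between two bag-of-words Counters."""
    dot = sum(a[t] * b[t] for t in a if t in b)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(
        sum(v * v for v in b.values())
    )
    return dot / norm if norm else 0.0


def retrieve(query, documents, k=1):
    """Return the k documents most similar to the query."""
    q = Counter(tokenize(query))
    ranked = sorted(
        documents,
        key=lambda d: cosine(q, Counter(tokenize(d))),
        reverse=True,
    )
    return ranked[:k]


def build_prompt(query, documents, k=1):
    """Place the retrieved passages in front of the question."""
    context = "\n".join(f"- {d}" for d in retrieve(query, documents, k))
    return (
        "Answer using only the context below.\n\n"
        f"Context:\n{context}\n\n"
        f"Question: {query}"
    )


# Hypothetical proprietary knowledge base, for illustration only.
KNOWLEDGE_BASE = [
    "Wire transfers above the daily limit require approval from a branch manager.",
    "Course enrolment closes two weeks before the start of each term.",
    "Claims for medical expenses must include an itemised receipt.",
]

QUESTION = "Who approves wire transfers above the daily limit?"
prompt = build_prompt(QUESTION, KNOWLEDGE_BASE)
print(prompt)
```

Because the proprietary documents are looked up at query time and never leave the organisation's own systems, this pattern fits the on-premise deployments described in this article. Fine-tuning, by contrast, changes the model weights themselves.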

Beyond cost considerations, data sovereignty drives lightweight LLM adoption in regulated industries. Organisations handling confidential information face risk exposure when processing data through external AI services subject to foreign jurisdiction.

NTT positions tsuzumi 2 as a “purely domestic model” developed from scratch in Japan, operating on-premises or in private clouds. This addresses concerns prevalent in Asia-Pacific markets about data residency, regulatory compliance, and information security.

FUJIFILM Business Innovation’s partnership with NTT DOCOMO BUSINESS demonstrates how enterprises combine lightweight models with existing data infrastructure. FUJIFILM’s REiLI technology converts unstructured corporate data – contracts, proposals, mixed text and images – into structured information.

Integrating tsuzumi 2’s generative capabilities enables advanced document analysis without transmitting sensitive corporate information to external AI providers. This architectural approach – combining lightweight models with on-premise data processing – represents a practical enterprise AI strategy balancing capability requirements with security, compliance, and cost constraints.

tsuzumi 2 includes built-in multimodal support handling text, images, and voice in enterprise applications. This matters for business workflows requiring AI to process multiple data types without deploying separate specialised models.

Manufacturing quality control, customer service operations, and document processing workflows typically involve text, images, and sometimes voice inputs. Single models handling all three reduce integration complexity compared to managing multiple specialised systems with different operational requirements.

NTT’s lightweight approach contrasts with hyperscaler strategies emphasising massive models with broad capabilities. For enterprises with substantial AI budgets and advanced technical teams, frontier models from OpenAI, Anthropic, and Google provide cutting-edge performance.

However, this approach excludes organisations lacking these resources – a significant portion of the enterprise market, particularly in Asia-Pacific regions with varying infrastructure quality. Regional considerations matter.

Power reliability, internet connectivity, data centre availability, and regulatory frameworks vary significantly across markets. Lightweight models enabling on-premise deployment accommodate these variations better than approaches requiring consistent cloud infrastructure access.

Organisations evaluating lightweight LLM deployment should consider several factors:

Domain specialisation: tsuzumi 2’s reinforced knowledge in financial, medical, and public sectors addresses specific domains, but organisations in other industries should evaluate whether available domain knowledge meets their requirements.

Language considerations: Optimisation for Japanese language processing benefits Japanese-market operations but may not suit multilingual enterprises requiring consistent cross-language performance.

Integration complexity: On-premise deployment requires internal technical capabilities for installation, maintenance, and updates. Organisations lacking these capabilities may find cloud-based alternatives operationally simpler despite higher costs.

Performance tradeoffs: While tsuzumi 2 matches larger models in specific domains, frontier models may outperform in edge cases or novel applications. Organisations should evaluate whether domain-specific performance suffices or whether broader capabilities justify higher infrastructure costs.

NTT’s tsuzumi 2 deployment demonstrates that sophisticated AI implementation doesn’t require hyperscale infrastructure – at least for organisations whose requirements align with lightweight model capabilities. Early enterprise adoptions show practical business value: reduced operational costs, improved data sovereignty, and production-ready performance for specific domains.

As enterprises navigate AI adoption, the tension between capability requirements and operational constraints increasingly drives demand for efficient, specialised solutions rather than general-purpose systems requiring extensive infrastructure.

For organisations evaluating AI deployment strategies, the question isn’t whether lightweight models are “better” than frontier systems – it’s whether they’re sufficient for specific business requirements while addressing cost, security, and operational constraints that make alternative approaches impractical.

The answer, as Tokyo Online University and FUJIFILM Business Innovation deployments demonstrate, is increasingly yes.
